Tableau runs R scripts using RServe, a free, open-source R package. But if you have a large number of users on Tableau Server and use R scripts heavily, pointing Tableau to a single RServe instance may not be sufficient.

Luckily, you can use a load balancer to distribute the load across multiple RServe instances without having to invest in a commercial R distribution. In this blog post, I will show you how to achieve this using another open-source project called HAProxy.

Let’s start by installing HAProxy.

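On a Mac, you can do this with Homebrew. If Homebrew isn't installed yet, install it first by running the following command in the terminal:

```shell
/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"
```

Then install HAProxy:

```shell
brew install haproxy
```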

Next, create a config file that points to the Rserve instances.

I created mine as haproxy.cfg in the folder /usr/local/Cellar/haproxy/, but any folder will do.

```
global
    daemon
    maxconn 256

defaults
    mode http
    timeout connect 5000ms
    timeout client 50000ms
    timeout server 50000ms

listen stats
    bind :8080
    mode http
    stats enable
    stats realm Haproxy\ Statistics
    stats uri /haproxy_stats
    stats hide-version
    stats auth admin:admin@rserve

frontend rserve_frontend
    bind *:80
    mode tcp
    timeout client 1m
    default_backend rserve_backend

backend rserve_backend
    mode tcp
    option log-health-checks
    option redispatch
    balance roundrobin
    timeout connect 10s
    timeout server 1m
    server rserve1 localhost:6311 check maxconn 32
    server rserve2 anotherserver.abc.lan:6311 check maxconn 32
```

The key settings in this config file are the timeouts, the maximum number of connections allowed for each Rserve instance (maxconn 32), and the host:port of each Rserve instance. The load balancer listens on port 80 and balances requests using the round-robin method. The stats page is served on port 8080, protected by the username and password given in the stats auth line. I used a very basic configuration, but the HAProxy documentation covers all the options in detail.
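The round-robin and maxconn settings can be illustrated with a toy Python model of the rserve_backend section. It is only a sketch under simplifying assumptions, not how HAProxy is actually implemented: `Server`, `RoundRobinBalancer`, `dispatch` and `finish` are hypothetical names, each server accepts at most `maxconn` concurrent requests, and a request that finds every server full waits in a single queue until a slot frees up.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    maxconn: int
    active: int = 0  # requests currently being evaluated on this server


@dataclass
class RoundRobinBalancer:
    servers: list
    next_index: int = 0  # which server gets the next request
    queue: deque = field(default_factory=deque)  # requests waiting for a slot

    def dispatch(self, request_id):
        """Send a request to the next server in turn that has a free slot.

        Returns the chosen server's name, or None if every server is at
        maxconn and the request was queued instead (toy model only).
        """
        for _ in range(len(self.servers)):
            server = self.servers[self.next_index]
            self.next_index = (self.next_index + 1) % len(self.servers)
            if server.active < server.maxconn:
                server.active += 1
                return server.name
        self.queue.append(request_id)
        return None

    def finish(self, server_name):
        """Mark one request on server_name as done, then hand the oldest
        queued request (if any) to the next available server."""
        for server in self.servers:
            if server.name == server_name:
                server.active -= 1
                break
        if self.queue:
            return self.dispatch(self.queue.popleft())
        return None
```

With the two servers from the config above, `dispatch` alternates between rserve1 and rserve2. If one of them reaches 32 concurrent requests, new work goes only to the other, and once both are full, requests wait until `finish` frees a slot. HAProxy's real scheduler also accounts for weights, health checks and redispatching, which this model leaves out.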

Let’s check that the config file is valid and free of typos:

```shell
BBERAN-MAC:~ bberan$ haproxy -f /usr/local/Cellar/haproxy/haproxy.cfg -c
Configuration file is valid
```

Now you can start HAProxy, passing it the path to the config file. sudo is needed because the frontend binds to port 80, a privileged port on Unix-like systems:

```shell
sudo haproxy -f /usr/local/Cellar/haproxy/haproxy.cfg
```

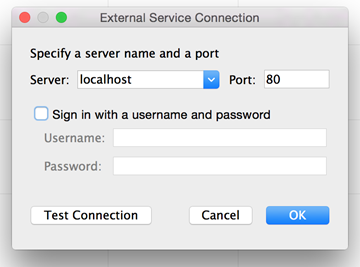
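Before pointing Tableau at the load balancer, you can optionally confirm that HAProxy is forwarding connections to Rserve. The sketch below relies on an assumption about Rserve's QAP1 protocol: the server greets every new connection with a 32-byte identification string that starts with `Rsrv`, followed by a 4-character version and the protocol name. Because the frontend runs in mode tcp, that greeting should pass through HAProxy unchanged. `read_rserve_banner` and `parse_rserve_id` are hypothetical helper names.

```python
import socket

RSERVE_ID_LENGTH = 32  # assumed size of the QAP1 greeting sent on connect


def parse_rserve_id(banner: bytes) -> dict:
    """Split an Rserve ID string into its signature, version and protocol."""
    if len(banner) < 12 or not banner.startswith(b"Rsrv"):
        raise ValueError(f"not an Rserve greeting: {banner[:12]!r}")
    return {
        "signature": banner[0:4].decode("ascii"),
        "version": banner[4:8].decode("ascii"),
        "protocol": banner[8:12].decode("ascii"),
    }


def read_rserve_banner(host: str, port: int, timeout: float = 5.0) -> bytes:
    """Open a TCP connection and read the greeting the server sends first."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        data = b""
        while len(data) < RSERVE_ID_LENGTH:
            chunk = sock.recv(RSERVE_ID_LENGTH - len(data))
            if not chunk:  # the proxy or server closed the connection early
                break
            data += chunk
        return data
```

For example, `parse_rserve_id(read_rserve_banner("localhost", 80))` should return something like `{'signature': 'Rsrv', 'version': '0103', 'protocol': 'QAP1'}` when HAProxy and at least one Rserve instance are up. A timeout or a `ValueError` suggests checking the frontend and backend settings.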

Let’s launch Tableau and enter the host and port number for the load balancer instead of an actual RServe instance.

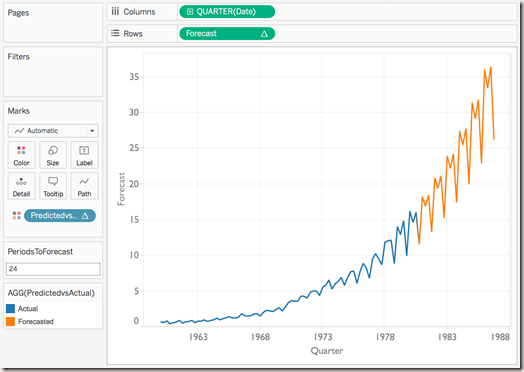

Success!! I can see the results from R’s forecasting package in Tableau through the load balancer we just configured.

Let’s run the calculation one more time.

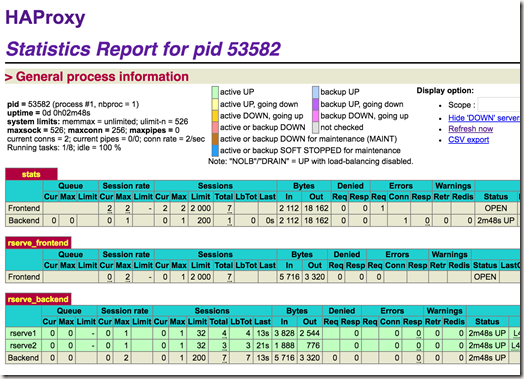

Now let’s look at the stats page for our HAProxy instance. Per our configuration file, it is at http://localhost:8080/haproxy_stats.

I can see the two requests I made, and that they were evaluated on different RServe instances. This is expected, since round-robin load balancing forwards each new client request to the next server in turn.
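The same counters can also be read from a script. HAProxy serves the stats page as CSV when `;csv` is appended to the stats URI, with columns such as `pxname` (proxy name), `svname` (server name) and `stot` (total sessions). The sketch below assumes that format and the credentials from the config above; `fetch_stats_csv` and `sessions_per_server` are hypothetical helper names.

```python
import base64
import csv
import io
import urllib.request


def fetch_stats_csv(url: str, user: str, password: str) -> str:
    """Download the HAProxy stats page in CSV form using HTTP basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode("ascii")
    request = urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.read().decode("utf-8")


def sessions_per_server(csv_text: str, backend: str) -> dict:
    """Return {server name: total sessions (stot)} for one backend."""
    # The header line starts with "# "; strip it so DictReader sees plain column names.
    reader = csv.DictReader(io.StringIO(csv_text.lstrip("# ")))
    result = {}
    for row in reader:
        # Skip the aggregate FRONTEND/BACKEND rows and keep individual servers.
        if row["pxname"] == backend and row["svname"] not in ("FRONTEND", "BACKEND"):
            result[row["svname"]] = int(row["stot"] or 0)
    return result
```

For example, `sessions_per_server(fetch_stats_csv("http://localhost:8080/haproxy_stats;csv", "admin", "admin@rserve"), "rserve_backend")` would be expected to show the sessions split between rserve1 and rserve2 after the two requests above.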

Now let’s install HAProxy on a server that is more typical of a production setup and have it start automatically.

I used a Linux machine (Ubuntu 14.04 specifically) for this. There are only a few small differences in the configuration steps. To install HAProxy, enter the following in a terminal window:

```shell
sudo apt-get install haproxy
```

Next, edit the file /etc/default/haproxy and set ENABLED=1 (it is 0 by default). Setting it to 1 lets HAProxy start automatically when the machine boots.

Then edit the config file, /etc/haproxy/haproxy.cfg, to match the config example above.

And we’re ready to start the load balancer:

```shell
sudo service haproxy start
```

Now you can serve many more visualizations containing R scripts to a larger number of Tableau users. Depending on the amount of load you’re dealing with, you can start by running multiple RServe processes on different ports of the same machine, or add more machines to scale out further.
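For the multiple-processes-on-one-machine option, each Rserve process needs its own port and its own server line in the backend. The sketch below only generates both as text. It assumes Rserve's default port 6311 as the starting point and the `--RS-port` command-line option for choosing a port (check the documentation for your Rserve version); `rserve_commands` and `haproxy_server_lines` are hypothetical helper names.

```python
def rserve_commands(first_port: int, count: int) -> list:
    """Shell commands that would start `count` Rserve processes on consecutive ports.

    Assumes `R CMD Rserve` accepts `--RS-port`; verify for your installation.
    """
    return [f"R CMD Rserve --no-save --RS-port {first_port + i}" for i in range(count)]


def haproxy_server_lines(host: str, first_port: int, count: int, maxconn: int = 32) -> list:
    """One HAProxy `server` line per Rserve process, in the style of the config above."""
    return [
        f"server rserve{i + 1} {host}:{first_port + i} check maxconn {maxconn}"
        for i in range(count)
    ]


# Print a starting point for three local Rserve processes.
for line in rserve_commands(6311, 3) + haproxy_server_lines("localhost", 6311, 3):
    print(line)
```

This is mainly useful on Windows, where Rserve runs as a single process unless you explicitly start several; on Linux and Mac, Rserve already forks a new process for incoming connections.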

Time to put advanced analytics dashboards on more screens 🙂

Hello Bora,

Thank you for your post. I'd like to know whether we can use a load balancer on Windows, since HAProxy is not available on Windows.

Hi Melanie,

You may want to try nginx or rinetd. If I can find time, I want to put together an example using nginx.

~Bora

Great article. Any general high-level guidance on how much load an RServe instance can take?

Thank you for your help

It all depends on how complex the algorithm is and how much data there is. If there is more traffic than it can handle, it will queue up the requests. As for how large each request can be, Rserve will give up where R itself would give up, that is, at R's memory limits.

Loved your article. Thank you…

When you have multiple users connecting, is there a way to kill a request in Rserve that has been running for several minutes without affecting other requests? Is that possible?

Thx

Unfortunately, there is no signal you can send to Rserve to halt processing. You need to find the processid and kill it through standard operating system methods.

Thank you for the response. Per your suggestion, when we kill the process, does it kill only the one that is taking a long time, without affecting other R processes that are running?

Thx

Thank you for the good article. Does R (Rserve) run jobs in parallel? For example, if 3 users run clustering requests, do all 3 run in parallel, or do they get queued up so that one job executes and completes before the next one starts?

Thank you…

It depends on how many Rserve processes you have running. If you have only one, requests will queue up. On Windows you’ll have one process unless you explicitly start several. On Linux, Unix, and Mac, Rserve automatically forks a new process when new requests come in, so they can be handled in parallel.

Hi Bora,

I’m hoping you can assist me. I am looking to do some sentiment analysis in Tableau using R. However, I have my own list of keywords that I want to use for this sentiment analysis. I noticed that the Rstem package doesn’t seem to be available for newer versions of R. Is there an older version I should use? Is it possible to use my own list of words for this analysis? Thanks in advance for your help.